
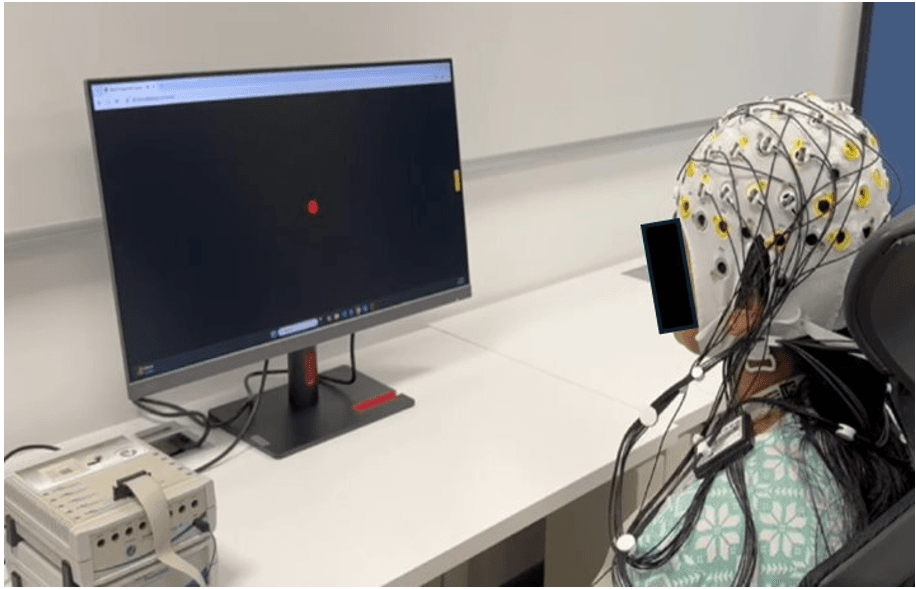

Non-invasive Online-self-correcting Closed-loop Brain Computer Interface for Decoding and Control of Motor Imagery Hand Movement Kinematics

The project aims to develop an online, closed-loop, self-correcting Motor Imagery-based Brain-Computer Interface (MI-BCI) to decode imagined hand movement direction and speed.

Using error detection, the system adapts to improve decoding accuracy over time.
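The adaptation idea can be illustrated with a minimal sketch. The project description does not specify the decoder, the features, or how errors are detected, so everything here is an assumption made for illustration: a toy two-class nearest-centroid decoder (`SelfCorrectingDecoder`, a hypothetical name), synthetic two-dimensional features, and an error detector simulated by comparing the decoded direction with the intended one. In a real system the error signal would come from the participant's brain activity and would itself be imperfect.

```python
import random


class SelfCorrectingDecoder:
    """Toy two-class nearest-centroid decoder that adapts from a binary error signal.

    Hypothetical illustration only; not the project's actual method.
    """

    def __init__(self, centroids, learning_rate=0.2):
        # centroids: dict mapping a class label to its feature-space centre.
        self.centroids = {label: list(c) for label, c in centroids.items()}
        self.learning_rate = learning_rate

    def decode(self, features):
        # Choose the class whose centroid is closest (squared Euclidean distance).
        def dist(label):
            return sum((f - c) ** 2 for f, c in zip(features, self.centroids[label]))

        return min(self.centroids, key=dist)

    def adapt(self, features, decoded, error_detected):
        # With only two classes, a detected error implies the other class was intended.
        if error_detected:
            target = next(label for label in self.centroids if label != decoded)
        else:
            target = decoded
        # Move the target centroid a small step towards the observed features.
        centre = self.centroids[target]
        for i, f in enumerate(features):
            centre[i] += self.learning_rate * (f - centre[i])
        return target


def simulate(trials=300, seed=0):
    """Run a closed loop: decode, detect error, adapt. Returns per-trial correctness."""
    rng = random.Random(seed)
    true_centres = {"left": [-1.0, 0.0], "right": [1.0, 0.0]}  # synthetic data
    # Start from a deliberately miscalibrated decoder.
    decoder = SelfCorrectingDecoder({"left": [0.5, 0.0], "right": [1.5, 0.0]})
    outcomes = []
    for _ in range(trials):
        intended = rng.choice(["left", "right"])
        features = [m + rng.gauss(0, 0.3) for m in true_centres[intended]]
        decoded = decoder.decode(features)
        # Assumption: a perfect error detector, simulated with the known intent.
        error_detected = decoded != intended
        decoder.adapt(features, decoded, error_detected)
        outcomes.append(decoded == intended)
    return outcomes


if __name__ == "__main__":
    results = simulate()
    print("accuracy, first 20 trials:", sum(results[:20]) / 20)
    print("accuracy, last 100 trials:", sum(results[-100:]) / 100)
```

The sketch only covers discrete direction. Decoding continuous kinematics such as speed would need a regression-style decoder, and a realistic error detector would occasionally mislabel trials, which this toy loop does not model.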

The framework will be validated on both healthy participants and stroke patients to ensure reliability for neurorehabilitation applications.

Project Deliverables/Outcomes/Impact:

- A BCI that enables more natural control of motor activities with increased accuracy and robustness.

- Improved standard of care for stroke patients through BCI-based motor rehabilitation.