Multimodal Visual Acuity Testing with Speech and Touch Panel

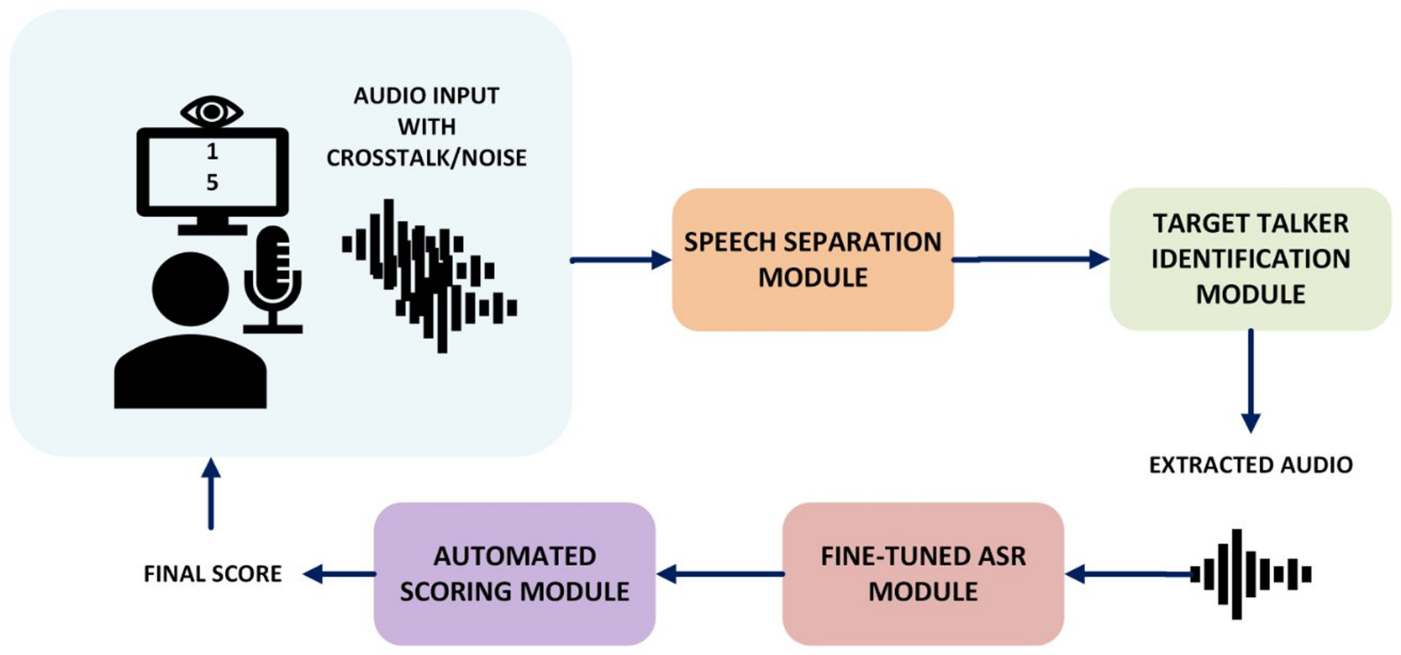

In this project, the team developed a novel automated visual acuity testing system that enables users to interact using natural speech. The system employs a multimodal approach, integrating both speech and image recognition to enhance efficiency and user experience.

Problem statement:

Visual acuity (VA) testing often serves as the initial step in eye clinic workflows. Since this process is typically repeated at each patient visit, the traditional one-on-one approach can become a bottleneck in clinical operations.

Solution and Notable Contribution:

The team addressed the following three key aspects:

- Fine-tuning the automatic speech recognition (ASR) model to accurately recognise Singapore-accented speech.

- Implementing noise cancellation and target speaker identification to handle noisy clinical environments.

- Developing a multimodal solution to address cross-talk from other speakers in the room.
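Target speaker identification is often done by comparing a speaker embedding of each incoming utterance with an embedding enrolled for the patient, and ignoring utterances that do not match. The sketch below illustrates only that generic idea and is not the team's implementation. It assumes the embeddings come from some pretrained speaker encoder (not specified here), and the function names and the 0.7 threshold are hypothetical.

```python
import math
from typing import Optional, Sequence

# Hypothetical threshold; a real system would tune it on recordings from the clinic.
SIMILARITY_THRESHOLD = 0.7


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero embedding as "no match"
    return dot / (norm_a * norm_b)


def accept_utterance(
    enrolled: Sequence[float],
    utterance: Sequence[float],
    transcript: str,
    threshold: float = SIMILARITY_THRESHOLD,
) -> Optional[str]:
    """Return the normalised transcript if the utterance likely comes from
    the enrolled patient; otherwise return None (e.g. cross-talk)."""
    if cosine_similarity(enrolled, utterance) >= threshold:
        return transcript.strip().upper()
    return None


if __name__ == "__main__":
    patient = [0.9, 0.1, 0.3]  # toy enrolled embedding
    print(accept_utterance(patient, [0.85, 0.15, 0.28], "e"))  # E
    print(accept_utterance(patient, [0.1, 0.9, -0.2], "f"))    # None
```

Thresholding a similarity score keeps the gating step independent of the ASR model, so either component can be swapped or retuned separately.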

Publications:

Boon Peng Yap, Michael Kok Liang Tan, Zhenghao Li, Rong Tong, Speech Enabled Visual Acuity Test, Interspeech 2024

Akshita Abrol, Ridwan Arefeen, Kelvin Zhenghao Li, Zhengkui Wang, Rong Tong, Real-Time Speech Recognition for Noisy Multi-Speaker Clinical Environments, to appear in IALP 2025